Artificial intelligence is no longer experimental; it’s foundational. From fintech and healthcare to retail and manufacturing, organizations are embedding machine learning into core business processes. Fraud detection systems analyze transactions in milliseconds.

Recommendation engines personalize user experiences in real time. Predictive maintenance models reduce downtime in industrial environments. AI is driving efficiency, automation, and competitive advantage at scale.

MLOps as a Service: Rapid AI Adoption Across Industries

Cloud platforms like AWS, Google Cloud, and Microsoft Azure have made machine learning infrastructure more accessible than ever. Prebuilt APIs, scalable storage, and GPU-enabled environments have lowered the barrier to entry for startups and enterprises alike.

But while AI adoption is accelerating, operational maturity is not keeping pace.

Building a machine learning model in a notebook is one thing. Running it reliably in production, which means handling live data, monitoring performance, retraining models, and ensuring compliance, is something entirely different.

This is where the real complexity begins.

The Gap Between Building Models and Deploying Them

Data science teams are often highly effective at experimentation. They build models, test hypotheses, and achieve promising accuracy metrics. However, many organizations struggle to move from prototype to production.

This gap between experimentation and deployment is one of the biggest AI deployment challenges companies face today.

Here’s why:

Models are built in isolated environments.

Data pipelines are inconsistent or manual.

There is no version control for models or datasets.

Deployment processes lack automation.

Monitoring systems are either incomplete or nonexistent.

As a result, promising machine learning initiatives stall. Models that perform well in development fail in real-world conditions. Data drifts. Performance degrades. And teams spend more time fixing pipelines than delivering value.

Without structured ML operations, AI initiatives become fragile, expensive, and difficult to scale.

The Operational Challenges of Scaling ML Systems

Once a model goes live, the work doesn’t stop; it multiplies.

Scaling machine learning systems introduces new layers of complexity:

1. Data Drift and Model Degradation

Real-world data constantly changes. Customer behavior shifts. Market conditions evolve. If models aren’t monitored and retrained regularly, their accuracy declines over time.

2. Infrastructure Management

Production ML systems require robust compute resources, scalable APIs, secure data pipelines, and orchestration tools. Managing this infrastructure internally demands specialized DevOps and ML engineering expertise.

3. Reproducibility and Versioning

Teams must track:

Dataset versions

Feature transformations

Model parameters

Training environments

Without proper ML operations practices, reproducing results becomes nearly impossible.

4. Compliance and Governance

Industries such as healthcare and finance must ensure auditability, traceability, and data security. Operationalizing AI without governance frameworks increases risk.

5. Collaboration Gaps

Data scientists, DevOps engineers, and software teams often operate in silos. Misalignment slows deployment and increases errors.

These challenges explain why many organizations invest heavily in AI but struggle to achieve consistent ROI.

MLOps as a Service (MLOpsaaS): The Solution

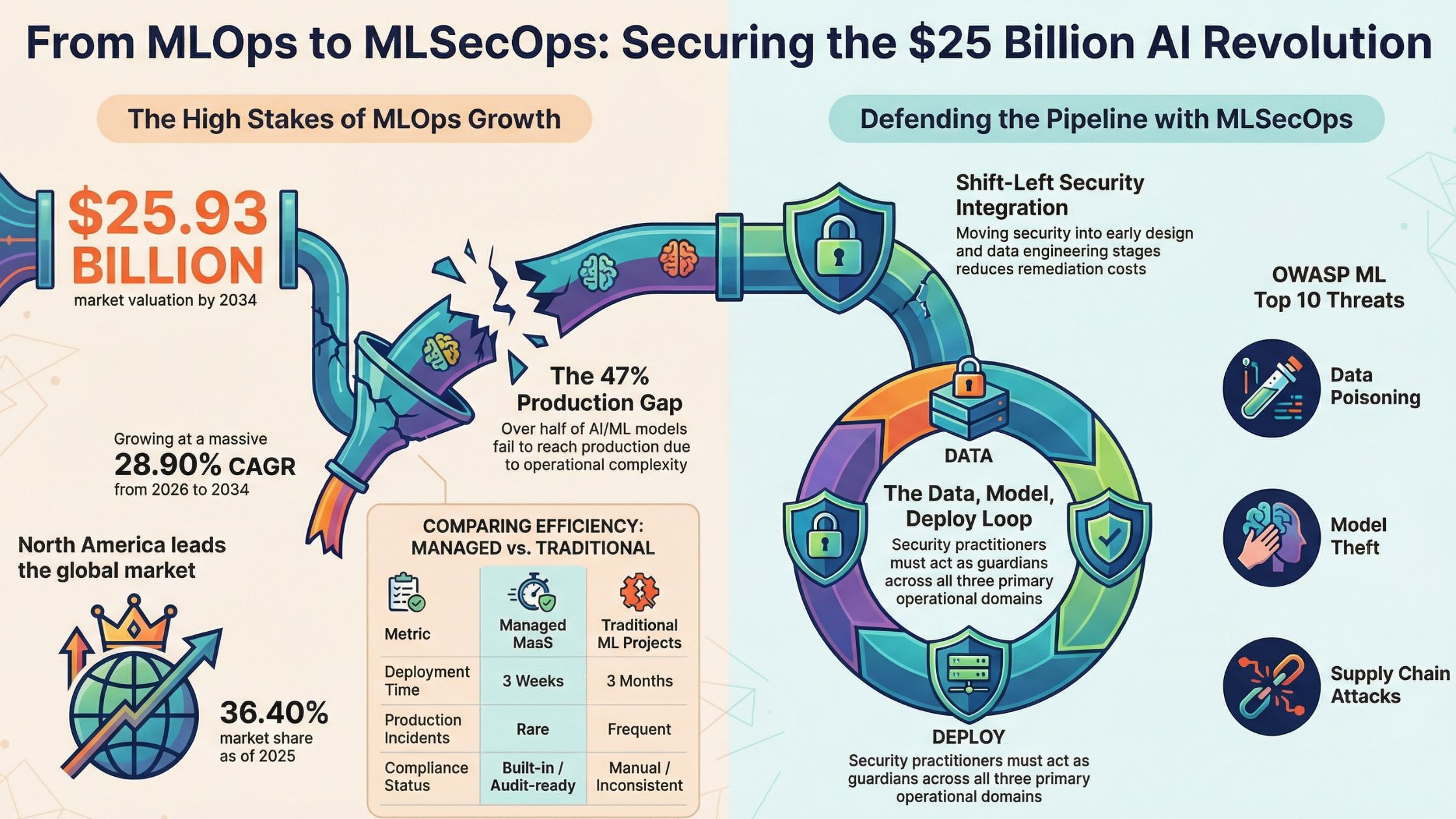

To address these AI deployment challenges, organizations are turning to MLOps as a Service (MLOpsaaS).

MLOps as a Service is a managed approach to ML operations that provides the infrastructure, automation, monitoring, and lifecycle management required to run machine learning systems at scale. Instead of building complex pipelines internally, companies leverage managed MLOps platforms and expert teams to operationalize AI efficiently.

With managed MLOps, organizations gain:

Automated ML pipelines

CI/CD for machine learning

Scalable deployment infrastructure

Continuous model monitoring

Drift detection and automated retraining

Governance and compliance support

This approach bridges the gap between experimentation and production. It ensures that machine learning models are not just built but maintained, monitored, and optimized continuously.

As AI becomes mission-critical, operational excellence becomes non-negotiable. MLOps as a Service enables businesses to focus on innovation while experts handle the complexity of ML operations.

In today’s AI-driven landscape, success is no longer about building models. It’s about deploying and scaling them reliably, and that’s exactly what managed MLOps is designed to do.

2. What Is MLOps?

Definition of MLOps (Machine Learning Operations)

MLOps (Machine Learning Operations) is a set of practices, tools, and cultural principles that standardize and automate the end-to-end lifecycle of machine learning models, from data preparation and model training to deployment, monitoring, and continuous improvement.

In simple terms, MLOps brings operational discipline to machine learning. Just as DevOps transformed software development by enabling continuous integration and deployment, ML operations ensures that AI systems can move from experimentation to reliable, scalable production environments.

Without MLOps, machine learning projects often remain stuck in research notebooks. With MLOps, models become production-ready systems that deliver measurable business value.

How MLOps Combines DevOps, Data Engineering, and Machine Learning

MLOps sits at the intersection of three major disciplines:

1. DevOps

DevOps focuses on automating software development and deployment pipelines. MLOps adopts DevOps principles such as:

Continuous Integration (CI)

Continuous Deployment (CD)

Infrastructure as Code

Automated testing

However, ML systems are more complex than traditional software because they depend on data and model behavior, not just code.

2. Data Engineering

Machine learning systems rely heavily on data pipelines. Data engineering ensures:

Reliable data ingestion

Data cleaning and validation

Feature engineering

Storage and transformation

If the data pipeline breaks, the model fails regardless of how well it was trained.

3. Machine Learning

The ML layer involves:

Model experimentation

Hyperparameter tuning

Evaluation metrics

Algorithm selection

MLOps connects all three domains into a unified, automated workflow that ensures machine learning models are reproducible, scalable, and production-ready.

Core Goals of MLOps

A strong ML operations framework is built around four primary goals:

1. Automation

Manual processes slow down AI deployment and introduce errors. MLOps automates:

Data preprocessing

Model training

Testing

Deployment workflows

Retraining triggers

Automation accelerates time-to-production and reduces operational overhead.

2. Reproducibility

Every model version should be reproducible. This means tracking:

Data versions

Feature transformations

Model parameters

Training environments

Reproducibility ensures teams can audit results and rebuild models consistently.

3. Scalability

As usage grows, models must handle increasing data volume and user requests. MLOps enables:

Horizontal scaling of inference APIs

Distributed training environments

Elastic cloud infrastructure

Scalability ensures performance does not degrade under load.

4. Monitoring

Machine learning models are dynamic systems. Their performance can degrade over time due to data drift or changing patterns. MLOps implements:

Real-time performance tracking

Drift detection

Alert systems

Automated retraining workflows

Monitoring keeps AI systems accurate and reliable.

Key Components of MLOps

A mature MLOps framework consists of several interconnected components that manage the entire ML lifecycle.

1. Data Pipelines

Data pipelines are the foundation of ML operations. They handle:

Data ingestion from multiple sources

Data cleaning and transformation

Feature engineering

Data validation and quality checks

Reliable pipelines ensure consistent and trustworthy input for models. Automated data workflows prevent bottlenecks and reduce manual intervention.

2. Model Training

The model training phase involves:

Experiment tracking

Hyperparameter tuning

Performance evaluation

Model versioning

MLOps platforms often integrate experiment tracking tools to log metrics, configurations, and artifacts. This ensures transparency and comparability between model versions.

3. CI/CD for Machine Learning

Continuous Integration and Continuous Deployment (CI/CD) in ML extends traditional DevOps pipelines to include models and data.

CI/CD for ML typically includes:

Automated testing of data and models

Validation of training pipelines

Automated model packaging

Deployment to staging and production environments

Unlike standard software CI/CD, ML pipelines must also validate datasets and performance metrics before deployment.
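The pre-deployment check described above can be sketched as a small gate that a CI job might call before promoting a model. This is a minimal illustration under stated assumptions, not any particular platform's API: the function name `passes_deployment_gate`, the metric names, and the thresholds are all hypothetical, and every metric is assumed to be "higher is better".

```python
from typing import Dict, List, Tuple


def passes_deployment_gate(
    candidate: Dict[str, float],
    baseline: Dict[str, float],
    minimums: Dict[str, float],
    max_regression: float = 0.01,
) -> Tuple[bool, List[str]]:
    """Return (ok, reasons). Assumes higher metric values are better."""
    reasons: List[str] = []

    # 1. Every required metric must be present and above its floor.
    for name, floor in minimums.items():
        value = candidate.get(name)
        if value is None:
            reasons.append(f"missing metric: {name}")
        elif value < floor:
            reasons.append(f"{name}={value:.3f} is below the minimum {floor:.3f}")

    # 2. The candidate must not be noticeably worse than the deployed model.
    for name, old in baseline.items():
        new = candidate.get(name)
        if new is not None and old - new > max_regression:
            reasons.append(f"{name} regressed from {old:.3f} to {new:.3f}")

    return (not reasons, reasons)
```

A pipeline step could fail the build whenever the first element of the result is false and print the reasons, so a blocked deployment explains itself in the CI log.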

4. Model Deployment

Deployment transforms a trained model into a live service.

Common deployment approaches include:

REST API endpoints for real-time inference

Batch processing systems

Edge deployments

Containerized environments (e.g., Docker + Kubernetes)

MLOps ensures deployment is repeatable, scalable, and secure.

5. Monitoring and Retraining

Once deployed, models must be continuously monitored.

Monitoring includes:

Prediction accuracy

Latency and throughput

Data drift detection

Model drift detection

Resource utilization

If performance drops below a threshold, automated retraining pipelines can be triggered using updated data.

This continuous feedback loop is what makes MLOps essential for long-term AI success.

Why MLOps Is Critical for Modern AI Systems

Machine learning models are not static assets; they are living systems that interact with evolving data. Without structured ML operations, organizations face deployment delays, performance degradation, and governance risks.

MLOps transforms machine learning from an experimental initiative into a reliable, production-grade capability.

It ensures that AI doesn’t just work in development; it works consistently in the real world.

3. What Is MLOps as a Service?

Definition of MLOps as a Managed Cloud Service

MLOps as a Service (MLOpsaaS) is a managed cloud-based solution that provides end-to-end machine learning lifecycle management, including infrastructure, automation, deployment, monitoring, and governance, without requiring organizations to build and maintain their own ML operations stack.

Instead of assembling tools, configuring pipelines, and hiring specialized ML engineers internally, businesses rely on a managed MLOps provider to handle the operational complexity of production AI systems.

In simple terms:

MLOps builds the system.

MLOps as a Service runs and manages it for you.

This model allows companies to focus on building models and delivering business value while experts manage the underlying ML infrastructure services.

How MLOps as a Service Differs from In-House MLOps

While traditional ML operations requires internal teams to design and maintain infrastructure, outsourced MLOps shifts responsibility to a specialized service provider.

Here’s how they differ:

Infrastructure Setup

In-House MLOps: Infrastructure is built from scratch, including cloud architecture, CI/CD pipelines, monitoring systems, and model registries.

MLOps as a Service: Prebuilt, cloud-native infrastructure is already configured and managed by the provider.

Expertise Required

In-House MLOps: Requires hiring and managing a team of ML engineers, DevOps engineers, data engineers, and platform specialists.

MLOps as a Service: Technical expertise is provided by the vendor, reducing the need for internal specialized teams.

Maintenance

In-House MLOps: Continuous internal effort is needed for updates, bug fixes, scaling, security patches, and infrastructure optimization.

MLOps as a Service: Fully managed by the service provider, including upgrades, monitoring, and optimization.

Scalability

In-House MLOps: Scaling depends on internal infrastructure capacity and engineering bandwidth.

MLOps as a Service: Elastic cloud scaling allows automatic resource adjustment based on workload demand.

Cost Structure

In-House MLOps: Requires high upfront investment in infrastructure, hiring, and long-term operational costs.

MLOps as a Service: Subscription-based or usage-based pricing model with predictable operational expenses.

Building in-house MLOps often requires:

Dedicated ML platform engineers

DevOps specialists

Data engineers

Ongoing infrastructure maintenance

MLOps as a Service eliminates the need to build and maintain this ecosystem internally.

Managed Infrastructure, Tooling, and Monitoring

A comprehensive managed MLOps solution typically includes:

1. Infrastructure Management

Cloud-based compute and storage

GPU-enabled environments

Container orchestration

Automated scaling

2. Tooling Integration

Experiment tracking

Model registry

CI/CD pipelines for ML

Data validation frameworks

3. Continuous Monitoring

Model performance tracking

Data drift detection

System health monitoring

Automated alerts and retraining triggers

Leading cloud platforms such as AWS SageMaker, Google Vertex AI, and Azure Machine Learning offer managed ML infrastructure services that support MLOps capabilities. However, many organizations also work with specialized outsourced MLOps providers for customized implementations.

Subscription-Based and Scalable Model

One of the biggest advantages of MLOps as a Service is its pricing and scalability model.

Instead of investing heavily in:

On-premise infrastructure

Platform engineering teams

Long-term DevOps overhead

organizations pay a subscription fee based on usage, such as compute, storage, API calls, or service tiers. This pay-as-you-scale approach makes managed MLOps particularly attractive for:

Startups launching AI features

Enterprises scaling multiple ML models

Companies transitioning from pilot to production

It aligns costs directly with AI growth.

4. Why Companies Need MLOps as a Service

As AI adoption increases, operational complexity grows with it. Many companies realize that building models is easy; running them reliably at scale is not.

Here’s why MLOps as a Service has become essential.

4.1 Faster Time to Production

One of the biggest AI deployment challenges is the delay between model development and production deployment.

Without structured ML operations:

Data pipelines break

Manual deployment processes slow releases

Integration issues cause rework

Monitoring systems are incomplete

Managed MLOps reduces these bottlenecks by providing:

Prebuilt infrastructure

Automated CI/CD pipelines

Standardized workflows

Proven deployment frameworks

This accelerates time-to-market and allows teams to ship AI-powered features faster.

For competitive industries, speed matters.

4.2 Lower Infrastructure Costs

Building internal ML operations is expensive.

Organizations must hire:

ML engineers

DevOps engineers

Data engineers

Cloud architects

They must also maintain:

Compute clusters

Storage systems

Monitoring infrastructure

Security layers

MLOps as a Service eliminates much of this overhead. Instead of building a dedicated ML platform team, companies leverage outsourced MLOps expertise.

Usage-based pricing means businesses pay for what they consume, while subscription tiers keep spending predictable. Either way, capital expenditure is reduced and costs shift to operational expenses.

For startups and mid-sized companies, this cost efficiency can be transformative.

4.3 Improved Model Reliability

Machine learning models degrade over time due to changing data patterns. Without continuous monitoring, accuracy drops and business performance suffers.

Managed MLOps ensures:

Real-time performance tracking

Drift detection systems

Automated retraining workflows

Version-controlled model updates

Continuous monitoring transforms AI systems into adaptive systems. Instead of failing silently, models are constantly evaluated and optimized.

This reliability is critical for use cases such as:

Fraud detection

Credit scoring

Healthcare diagnostics

Demand forecasting

AI systems must be trustworthy, and ML operations helps keep them that way.

4.4 Security and Compliance

AI systems often process sensitive data. Without structured governance, organizations face security risks and compliance violations.

MLOps as a Service provides:

Secure data pipelines

Role-based access control

Encryption at rest and in transit

Audit trails and logging

Governance frameworks

For regulated industries, managed ML infrastructure services reduce risk by implementing standardized compliance protocols.

Security is no longer optional; it’s foundational.

The Strategic Advantage of Managed MLOps

As AI becomes core to business strategy, operational excellence becomes a competitive differentiator.

MLOps as a Service enables organizations to:

Deploy faster

Scale efficiently

Reduce operational complexity

Maintain model accuracy

Ensure compliance

Rather than treating ML operations as an internal engineering burden, companies can leverage outsourced MLOps expertise to accelerate innovation.

In a world where AI adoption is accelerating, the ability to operationalize machine learning reliably may determine which organizations lead and which fall behind.

5. Core Components of MLOps as a Service

A robust MLOps as a Service solution provides a fully managed framework that handles every stage of the machine learning lifecycle. From raw data ingestion to automated retraining, each component is designed to ensure scalability, reliability, and operational efficiency.

Here’s a breakdown of the core components that power managed MLOps platforms.

5.1 Data Engineering & Pipeline Automation

Data is the foundation of every machine learning system. Without reliable data pipelines, even the most sophisticated models fail.

Data Ingestion

Managed MLOps platforms automate data ingestion from multiple sources such as:

Databases

APIs

Cloud storage

Streaming systems

This ensures real-time and batch data flows are consistent and production-ready.

Data Validation

Data quality directly impacts model accuracy. MLOps as a Service includes:

Schema validation

Anomaly detection

Missing value checks

Consistency monitoring

Automated validation prevents corrupted or incomplete datasets from entering training pipelines.
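The schema and missing-value checks listed above can be illustrated with a small validator. This is a sketch under stated assumptions: the schema, the column names, and the `validate_rows` function are hypothetical, and production platforms usually rely on a dedicated validation framework rather than hand-written checks.

```python
from typing import Any, Dict, List

# Hypothetical schema: column name -> expected Python type.
SCHEMA: Dict[str, type] = {"user_id": int, "amount": float, "country": str}


def validate_rows(
    rows: List[Dict[str, Any]],
    schema: Dict[str, type],
    max_missing_ratio: float = 0.05,
) -> List[str]:
    """Return a list of problems; an empty list means the batch passed."""
    problems: List[str] = []
    missing = {col: 0 for col in schema}

    for i, row in enumerate(rows):
        # Schema check: no columns the pipeline does not know about.
        unexpected = set(row) - set(schema)
        if unexpected:
            problems.append(f"row {i}: unexpected columns {sorted(unexpected)}")
        for col, expected in schema.items():
            value = row.get(col)
            if value is None:
                missing[col] += 1  # counted here, judged per column below
            elif not isinstance(value, expected):
                problems.append(
                    f"row {i}: {col} should be {expected.__name__}, "
                    f"got {type(value).__name__}"
                )

    # Missing-value check: tolerate a small share of gaps per column.
    for col, count in missing.items():
        if rows and count / len(rows) > max_missing_ratio:
            problems.append(f"{col}: {count}/{len(rows)} values missing")
    return problems
```

Returning a list of problems instead of raising on the first one lets a pipeline report everything wrong with a batch in a single run.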

Feature Engineering

Feature engineering transforms raw data into meaningful inputs for models. Managed ML infrastructure services often provide:

Feature stores

Reusable feature pipelines

Version-controlled transformations

This ensures consistency between training and production environments.

5.2 Model Training & Experiment Tracking

Training models in production environments requires structure and reproducibility.

Hyperparameter Tuning

MLOps as a Service automates hyperparameter optimization using distributed training environments. This improves model performance without manual trial-and-error processes.

Version Control

Every experiment is tracked, including:

Model versions

Dataset versions

Code changes

Training configurations

Version control ensures traceability and rollback capabilities if performance issues arise.

Reproducibility

Reproducibility is critical for debugging and compliance. Managed MLOps platforms log:

Training environments

Dependencies

Random seeds

Evaluation metrics

This makes it possible to rebuild a model as it was originally trained, although bit-for-bit identical results can still depend on hardware and library determinism.
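One way to make a training run traceable is to derive a single fingerprint from the dataset, the configuration, and the random seed, and to log it next to the model. The sketch below uses only the Python standard library; the function names `run_fingerprint` and `seeded_shuffle` are hypothetical, and real platforms typically also record the code revision and dependency versions.

```python
import hashlib
import json
import random
from typing import Any, Dict, List


def run_fingerprint(data_bytes: bytes, config: Dict[str, Any], seed: int) -> str:
    """Hash the dataset contents, the training configuration, and the seed together."""
    digest = hashlib.sha256()
    digest.update(hashlib.sha256(data_bytes).digest())
    # sort_keys makes the JSON form independent of dict insertion order.
    digest.update(json.dumps(config, sort_keys=True).encode("utf-8"))
    digest.update(str(seed).encode("utf-8"))
    return digest.hexdigest()


def seeded_shuffle(items: List[Any], seed: int) -> List[Any]:
    """Shuffle a copy with a dedicated RNG so the order is repeatable for a given seed."""
    rng = random.Random(seed)
    shuffled = list(items)
    rng.shuffle(shuffled)
    return shuffled
```

If two runs report the same fingerprint, they used the same data, configuration, and seed; a different fingerprint shows that at least one of the three inputs changed.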

5.3 Model Deployment & Serving

Deploying a model into production transforms it into a live business system.

Real-Time APIs

Models can be deployed as REST or gRPC APIs to support real-time predictions for applications such as fraud detection, recommendation engines, and chatbots.

Batch Processing

For use cases like forecasting or large-scale data analysis, batch inference pipelines process data at scheduled intervals.
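A batch inference job usually reads records in chunks so that memory use stays bounded. The following sketch shows the idea; `predict` stands in for whatever scoring function a real model exposes and is an assumption, as are the function names and the default chunk size.

```python
from itertools import islice
from typing import Callable, Dict, Iterable, Iterator, List

Record = Dict[str, float]


def batched(records: Iterable[Record], size: int) -> Iterator[List[Record]]:
    """Yield lists of at most `size` records without loading everything at once."""
    if size < 1:
        raise ValueError("size must be at least 1")
    iterator = iter(records)
    while True:
        chunk = list(islice(iterator, size))
        if not chunk:
            return
        yield chunk


def run_batch_inference(
    records: Iterable[Record],
    predict: Callable[[List[Record]], List[float]],
    size: int = 1000,
) -> List[float]:
    """Score every record chunk by chunk and return the scores in input order."""
    scores: List[float] = []
    for chunk in batched(records, size):
        scores.extend(predict(chunk))
    return scores
```

A scheduler (cron, an orchestration tool, or the provider's own job runner) would call `run_batch_inference` at the chosen interval and write the scores to storage.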

Containerization (Docker & Kubernetes)

Modern ML deployment relies heavily on containerization tools like:

Docker

Kubernetes

Containers ensure consistency across environments and enable horizontal scaling in cloud infrastructure.

Managed MLOps services handle orchestration, scaling, and uptime, reducing operational risk.

5.4 Continuous Monitoring & Observability

Machine learning models degrade over time. Continuous monitoring ensures performance remains stable after deployment.

Performance Metrics

Managed platforms track:

Accuracy

Precision and recall

Latency

Throughput

Resource utilization

These metrics provide real-time visibility into model health.

Data Drift Detection

Data distributions change due to shifting user behavior, market conditions, or seasonality. Drift detection systems identify when incoming data deviates from training data patterns.
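One common way to quantify this deviation for a single numeric feature is the Population Stability Index (PSI), which compares the binned distribution of training data with that of incoming data. The article does not name a specific method, so treat the choice of PSI, the bin count, and the function name below as assumptions. Both inputs are assumed to be non-empty.

```python
import math
from typing import List, Sequence


def population_stability_index(
    expected: Sequence[float],
    actual: Sequence[float],
    bins: int = 10,
    eps: float = 1e-6,
) -> float:
    """PSI between a reference sample (training) and a new sample (production)."""
    lo, hi = min(expected), max(expected)
    # Fall back to a width of 1.0 when all reference values are identical.
    width = (hi - lo) / bins or 1.0

    def proportions(values: Sequence[float]) -> List[float]:
        counts = [0] * bins
        for v in values:
            # Clamp so values outside the training range land in the edge bins.
            idx = int((v - lo) / width)
            counts[min(max(idx, 0), bins - 1)] += 1
        # Floor at eps so empty bins do not cause log(0) or division by zero.
        return [max(c / len(values), eps) for c in counts]

    e, a = proportions(expected), proportions(actual)
    return sum((ai - ei) * math.log(ai / ei) for ei, ai in zip(e, a))
```

A frequently cited rule of thumb reads a PSI below 0.1 as stable and above 0.25 as a significant shift, but the right threshold depends on the feature and should be tuned per use case.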

Model Degradation Alerts

If performance drops below predefined thresholds, automated alerts notify teams. In advanced systems, retraining workflows can be triggered automatically.
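A threshold alert of this kind can be sketched with a rolling window over recent predictions whose true labels have arrived. The class name, window size, and thresholds below are hypothetical; a managed platform would normally provide this as a configured monitor rather than custom code.

```python
from collections import deque
from typing import Deque, Optional


class AccuracyMonitor:
    """Track accuracy over the most recent labelled predictions."""

    def __init__(self, window: int = 500, threshold: float = 0.9, min_samples: int = 50):
        self.outcomes: Deque[int] = deque(maxlen=window)  # 1 = correct, 0 = wrong
        self.threshold = threshold
        self.min_samples = min_samples

    def record(self, prediction: object, label: object) -> None:
        self.outcomes.append(1 if prediction == label else 0)

    @property
    def accuracy(self) -> Optional[float]:
        if not self.outcomes:
            return None
        return sum(self.outcomes) / len(self.outcomes)

    def should_alert(self) -> bool:
        # Stay quiet until there is enough evidence to avoid noisy alerts.
        if len(self.outcomes) < self.min_samples:
            return False
        return self.accuracy < self.threshold
```

The `min_samples` guard is a deliberate design choice: with only a handful of labelled predictions, a single mistake would otherwise trigger an alert.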

Observability ensures AI systems remain trustworthy and production-ready.

5.5 Automated Retraining & Lifecycle Management

MLOps as a Service doesn’t just deploy models; it manages their entire lifecycle.

Scheduled Retraining

Models can be retrained at fixed intervals (daily, weekly, monthly) to maintain accuracy as new data becomes available.

Trigger-Based Retraining

Retraining can also be triggered automatically when:

Drift is detected

Performance metrics decline

Data thresholds are exceeded

This creates a self-healing ML system.
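The three triggers above can be combined into one decision function that a scheduler evaluates after each monitoring cycle. The policy fields, their default values, and the function name are hypothetical and only illustrate the shape of such a rule.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class RetrainPolicy:
    max_drift_score: float = 0.25  # e.g. a drift statistic per feature
    min_accuracy: float = 0.90     # lowest acceptable live accuracy
    min_new_rows: int = 10_000     # enough fresh data to justify a run


def retrain_reasons(
    policy: RetrainPolicy, drift_score: float, accuracy: float, new_rows: int
) -> List[str]:
    """Return every trigger that fired; an empty list means no retraining is needed."""
    reasons: List[str] = []
    if drift_score > policy.max_drift_score:
        reasons.append("drift detected")
    if accuracy < policy.min_accuracy:
        reasons.append("performance declined")
    if new_rows >= policy.min_new_rows:
        reasons.append("data threshold exceeded")
    return reasons
```

Recording the reasons alongside each retraining run gives auditors a trail that explains why a new model version was produced.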

Model Registry

A centralized model registry stores:

Approved models

Version histories

Metadata

Deployment status

This ensures proper governance, auditability, and rollback capabilities.
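To make the registry idea concrete, here is a minimal in-memory sketch that tracks versions, metadata, deployment status, and rollback. It is an illustration only: the class and method names are hypothetical, the artifact URIs are placeholders, and a real registry persists its state and enforces approvals.

```python
from dataclasses import dataclass
from typing import Dict, List, Optional


@dataclass
class ModelVersion:
    version: int
    artifact_uri: str
    metrics: Dict[str, float]
    stage: str = "staging"  # "staging", "production", or "archived"


class ModelRegistry:
    def __init__(self) -> None:
        self._versions: List[ModelVersion] = []
        self._history: List[int] = []  # versions promoted to production, oldest first

    def register(self, artifact_uri: str, metrics: Dict[str, float]) -> ModelVersion:
        entry = ModelVersion(len(self._versions) + 1, artifact_uri, dict(metrics))
        self._versions.append(entry)
        return entry

    def get(self, version: int) -> ModelVersion:
        if not 1 <= version <= len(self._versions):
            raise KeyError(version)
        return self._versions[version - 1]

    def production(self) -> Optional[ModelVersion]:
        return self.get(self._history[-1]) if self._history else None

    def promote(self, version: int) -> None:
        target = self.get(version)
        current = self.production()
        if current is not None:
            current.stage = "archived"
        target.stage = "production"
        self._history.append(version)

    def rollback(self) -> ModelVersion:
        """Archive the current production model and restore the previous one."""
        if len(self._history) < 2:
            raise RuntimeError("no earlier production version to roll back to")
        self.get(self._history.pop()).stage = "archived"
        restored = self.get(self._history[-1])
        restored.stage = "production"
        return restored
```

Keeping the promotion history separate from the version list is what makes rollback a single step instead of a search through metadata.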

Why These Components Matter

Together, these components form a complete, managed ML operations ecosystem. Instead of fragmented tools and manual processes, organizations get an integrated system that supports:

Faster deployment

Improved reliability

Scalable infrastructure

Continuous optimization

By combining automated pipelines, monitoring, and lifecycle management, MLOps as a Service transforms machine learning from a one-time experiment into a continuously evolving production system.

6. How MLOps as a Service Works (Step-by-Step)

Understanding how MLOps as a Service works helps organizations see how managed ML operations turn experimental models into scalable, production-ready AI systems. Below is a structured, step-by-step breakdown of the typical workflow followed by managed MLOps providers.

Step 1: Business Requirement Gathering

Every successful ML initiative starts with clarity.

At this stage, the MLOps provider collaborates with stakeholders to define:

Business objectives

Success metrics (KPIs)

Model performance benchmarks

Compliance requirements

Integration needs

Instead of focusing only on algorithms, this phase ensures the machine learning solution aligns with measurable business outcomes.

For example:

Fraud detection → Reduce false positives by 20%

Demand forecasting → Improve forecast accuracy by 15%

Recommendation engine → Increase conversion rates

Clear goals prevent over-engineering and ensure the ML operations pipeline is designed for impact.

Step 2: Data Assessment & Preparation

Once objectives are defined, the next step is evaluating the available data.

This includes:

Data source identification

Data quality assessment

Gap analysis

Privacy and compliance review

Feature identification

Managed MLOps teams often conduct data audits to detect:

Missing values

Bias issues

Data inconsistencies

Schema mismatches

After assessment, data pipelines are prepared for:

Cleaning

Transformation

Feature engineering

Versioning

This phase ensures the foundation of the ML system is reliable and production-ready.

Step 3: Infrastructure Setup

With data readiness confirmed, the provider configures scalable ML infrastructure.

This typically involves:

Cloud environment provisioning

Compute resource allocation (CPU/GPU)

Storage configuration

Access control implementation

Security policies setup

Infrastructure is designed to be:

Elastic (scales with demand)

Secure (encrypted and access-controlled)

Automated (Infrastructure as Code)

Because it’s managed MLOps, most of this setup uses prebuilt cloud-native templates to accelerate deployment and reduce configuration errors.

Step 4: Pipeline Creation

This is where ML operations truly come to life.

The provider builds automated pipelines for:

Data ingestion

Data validation

Feature engineering

Model training

Testing

Deployment

CI/CD pipelines are configured specifically for machine learning workflows.

Automation ensures that:

Code changes trigger retraining

New data updates trigger validation

Performance checks run before deployment

This eliminates manual bottlenecks and reduces human error.
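The stages listed above can be chained by a small runner that executes them in order and stops at the first failure, which is the core behavior of most pipeline tools. Everything in this sketch is hypothetical: the runner, the stage functions, and the toy "model" (a simple mean) exist only to show the control flow.

```python
from typing import Any, Callable, Dict, List, Tuple

Context = Dict[str, Any]
Stage = Tuple[str, Callable[[Context], Context]]


def run_pipeline(stages: List[Stage], context: Context) -> Context:
    """Run stages in order; each receives the context and returns updates to merge in."""
    completed: List[str] = []
    for name, stage in stages:
        try:
            context = {**context, **stage(context)}
        except Exception as exc:
            # Fail fast so a bad batch never reaches training or deployment.
            raise RuntimeError(f"stage '{name}' failed after {completed}: {exc}") from exc
        completed.append(name)
    return {**context, "completed": completed}


def ingest(ctx: Context) -> Context:
    return {"rows": [1.0, 2.0, 3.0]}  # stand-in for reading from a real source


def validate(ctx: Context) -> Context:
    if not ctx["rows"]:
        raise ValueError("no rows ingested")
    return {}


def train(ctx: Context) -> Context:
    # Toy "model": the mean of the rows, just to produce an artifact.
    return {"model": sum(ctx["rows"]) / len(ctx["rows"])}
```

Calling `run_pipeline([("ingest", ingest), ("validate", validate), ("train", train)], {})` returns a context that contains the trained artifact and the list of completed stages.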

Step 5: Model Training & Validation

Once pipelines are operational, models are trained using:

Clean, validated datasets

Version-controlled code

Experiment tracking tools

Validation includes:

Performance benchmarking

Bias and fairness checks

Stress testing

Cross-validation

Only models that meet predefined business and technical thresholds are approved for production.

This structured validation process reduces deployment risk and increases reliability.
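Cross-validation, one of the checks listed above, splits the data into k folds and uses each fold once for validation. The sketch below only generates the index splits; the function name is hypothetical, and it assumes the data has already been shuffled, since it assigns contiguous index ranges to folds.

```python
from typing import Iterator, List, Tuple


def k_fold_indices(n_samples: int, k: int) -> Iterator[Tuple[List[int], List[int]]]:
    """Yield (training_indices, validation_indices) for each of k folds."""
    if not 2 <= k <= n_samples:
        raise ValueError("k must be between 2 and n_samples")
    # Spread the remainder so fold sizes differ by at most one.
    base, extra = divmod(n_samples, k)
    start = 0
    for fold in range(k):
        stop = start + base + (1 if fold < extra else 0)
        validation = list(range(start, stop))
        training = list(range(0, start)) + list(range(stop, n_samples))
        yield training, validation
        start = stop
```

Averaging the metric over all folds gives a steadier estimate than a single train/test split, which is why it is a common input to an approval threshold.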

Step 6: Deployment to Production

After approval, the model is deployed into a live environment.

Depending on the use case, deployment may include:

Real-time inference APIs

Batch processing systems

Microservices architecture

Containerized deployment

Managed MLOps ensures:

Zero-downtime deployments

Rollback capability

Version control

Secure endpoints

Deployment is no longer a one-time event; it becomes part of a repeatable, automated workflow.
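One technique often used to get low-risk releases with easy rollback is a canary rollout, where a small, stable share of traffic is sent to the new model version. The article does not prescribe this technique, so the sketch below is an assumption, as are the function name and the version labels.

```python
import hashlib


def route_version(
    request_id: str,
    canary_percent: int,
    stable: str = "v1",
    canary: str = "v2",
) -> str:
    """Pick a model version for a request; the same ID always gets the same answer."""
    if not 0 <= canary_percent <= 100:
        raise ValueError("canary_percent must be between 0 and 100")
    # Hash the ID into one of 100 buckets so routing is deterministic and sticky.
    digest = hashlib.sha256(request_id.encode("utf-8")).digest()
    bucket = int.from_bytes(digest[:4], "big") % 100
    return canary if bucket < canary_percent else stable
```

Raising `canary_percent` step by step shifts traffic to the new version, and setting it back to 0 acts as an immediate rollback without redeploying anything.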

Step 7: Monitoring & Optimization

Deployment is not the end; it’s the beginning of continuous improvement.

Managed MLOps platforms implement:

Real-time performance monitoring

Latency and throughput tracking

Data drift detection

Model drift analysis

Automated alert systems

If performance degrades, retraining workflows can be triggered automatically.

Optimization may include:

Hyperparameter retuning

Feature updates

Infrastructure scaling

Model replacement

This continuous feedback loop ensures AI systems remain accurate, efficient, and aligned with business goals.

The Continuous Lifecycle Advantage

Unlike traditional development cycles, machine learning systems require constant attention. MLOps as a Service transforms AI into a living, adaptive system rather than a static deployment.

The step-by-step process ensures:

Strategic alignment

Operational efficiency

Scalable infrastructure

Ongoing optimization

By structuring and automating the lifecycle, from business requirement gathering to monitoring and retraining, managed ML operations reduce complexity and accelerate AI ROI.

In modern AI-driven enterprises, this structured approach is what separates experimental models from scalable, production-grade intelligence.

7. MLOps as a Service vs In-House MLOps (Point by Point)

Here’s a clear, point-by-point comparison between MLOps as a Service and In-House MLOps:

Infrastructure Setup

In-House MLOps: Infrastructure is designed and built internally from scratch, including cloud architecture, CI/CD pipelines, model registries, and monitoring systems.

MLOps as a Service: Infrastructure is prebuilt, cloud-native, and managed by the service provider.

Time to Deployment

In-House MLOps: Slower initial setup due to architecture design, tool selection, and team onboarding.

MLOps as a Service: Faster deployment using ready-made frameworks and automated workflows.

Expertise & Talent Requirements

In-House MLOps: Requires hiring ML engineers, DevOps specialists, platform engineers, and cloud architects.

MLOps as a Service: Vendor provides the required expertise, reducing internal hiring needs.

Cost Structure

In-House MLOps: High upfront investment in infrastructure, recruitment, training, and ongoing maintenance.

MLOps as a Service: Subscription-based or usage-based pricing with predictable operational expenses.

Scalability

In-House MLOps: Scaling depends on internal infrastructure capacity and engineering bandwidth.

MLOps as a Service: Elastic cloud scaling with automatic resource allocation based on demand.

Maintenance & Upgrades

In-House MLOps: Internal teams are responsible for updates, security patches, and system optimization.

MLOps as a Service: Fully managed by the provider, including upgrades and performance optimization.

Monitoring & Model Reliability

In-House MLOps: Monitoring systems must be built and maintained internally.

MLOps as a Service: Continuous monitoring, drift detection, and automated retraining are integrated.

Security & Compliance

In-House MLOps: Requires internal governance frameworks and compliance monitoring.

MLOps as a Service: Built-in security standards, access control, and compliance-ready infrastructure.

Operational Complexity

In-House MLOps: Higher complexity due to managing multiple tools, integrations, and workflows.

MLOps as a Service: Simplified operations through centralized and automated management.

Focus on Core Business

In-House MLOps: Significant time spent managing infrastructure rather than building models.

MLOps as a Service: Teams focus on innovation and model development while the provider handles ML operations.

8. How to Choose the Right MLOps as a Service Provider

Selecting the right MLOps as a Service partner can make or break your AI initiatives. Here are the key factors to consider when evaluating providers:

Cloud Compatibility

Ensure the provider supports your existing cloud environment (e.g., AWS, Azure, Google Cloud).

Look for seamless integration with your data storage, compute, and identity services.

Multi-cloud or hybrid support is a plus for flexibility.

Security Standards

Verify that the platform enforces secure data pipelines, encryption at rest and in transit, and role-based access control (RBAC).

Review compliance certifications and attestations (e.g., SOC 2, ISO 27001) and support for regulations such as GDPR.

Check how the provider handles audit logs, authentication, and key management.

Monitoring Capabilities

Strong monitoring is critical for production ML systems.

Look for real-time performance tracking, alerting, drift detection, and observability dashboards.

Advanced providers offer automated retraining triggers based on performance thresholds.

Pricing Model

Understand the pricing structure: subscription vs usage-based vs tiered plans.

Assess cost predictability and scalability as your ML workload grows.

Watch out for hidden costs like data egress, pipeline runs, or additional modules.

Support & SLAs

Evaluate the level of technical support offered (e.g., dedicated support engineers, response times, business hours vs 24/7).

Look for clear Service Level Agreements (SLAs) that guarantee uptime, issue response times, and escalation paths.

Check for training resources, onboarding assistance, and community or professional support channels.

Customization Flexibility

Your business may have unique workflows, compliance needs, or tooling preferences.

Choose a provider that allows customization of pipelines, model deployment patterns, observability metrics, and deployment targets.

Flexibility ensures that the platform adapts to your use cases, not the other way around.

Ready to Accelerate Your AI with Expert MLOps Support?

If you’re evaluating managed MLOps options and want a partner that ticks all the boxes, from secure, scalable ML infrastructure services to customizable automation and dedicated support, Advant AI Labs can help.

Advant AI Labs specializes in delivering enterprise-grade MLOps as a Service, combining:

Cloud-agnostic architecture

Robust monitoring & retraining automation

Security & compliance aligned with industry standards

Flexible pricing and customized solutions

Expert support and onboarding

👉 Talk to Advant AI Labs today to streamline your ML operations and scale your AI with confidence.

FAQs

1. What is MLOps as a Service?

Answer: MLOps as a Service is a managed cloud solution that provides end-to-end ML operations, including data pipeline automation, model training, deployment, monitoring, and retraining. Instead of building internal ML infrastructure, organizations rely on a third-party provider to manage the entire machine learning lifecycle.

It combines automation, scalability, and governance to ensure machine learning models move from experimentation to reliable production environments.

2. How is MLOps different from DevOps?

Answer: While DevOps focuses on automating and streamlining software development and deployment, MLOps (Machine Learning Operations) extends those principles to machine learning systems.

Key differences include:

MLOps manages both code and data dependencies.

It includes model versioning, experiment tracking, and drift detection.

It supports automated retraining workflows.

It monitors model performance after deployment.

In short, DevOps optimizes software delivery, whereas ML operations optimizes the entire machine learning lifecycle.

3. Is MLOps as a Service suitable for startups?

Answer: Yes, MLOps as a Service is highly suitable for startups.

Startups often lack:

Dedicated ML platform teams

Large infrastructure budgets

Specialized DevOps resources

A managed MLOps solution allows startups to:

Deploy AI products faster

Avoid heavy upfront infrastructure costs

Scale as usage grows

Focus on product innovation rather than infrastructure management

For early-stage companies building AI-powered products, outsourced MLOps can significantly accelerate time-to-market.

4. How much does MLOps as a Service cost?

Answer: The cost of MLOps as a Service varies depending on:

Cloud usage (compute, storage, GPUs)

Number of deployed models

Data volume

Monitoring and retraining frequency

Service tier and support level

Most providers offer subscription-based or usage-based pricing models. This allows businesses to align costs with growth and avoid high upfront investments typically required for in-house ML infrastructure services.

5. What tools are used in MLOps?

Answer: MLOps environments commonly use a combination of open-source and cloud-native tools, including:

Containerization: Docker

Orchestration: Kubernetes

Managed ML platforms: AWS SageMaker, Google Vertex AI, Azure Machine Learning

Workflow orchestration: Kubeflow

Managed MLOps providers typically integrate these tools into a unified, automated system.

9. Conclusion

Artificial intelligence without operational maturity often fails.

Many organizations successfully build machine learning models but struggle to deploy, monitor, scale, and maintain them in production. Without structured ML operations, models degrade, pipelines break, and ROI diminishes.

MLOps as a Service bridges this gap.

It transforms experimental AI initiatives into scalable, secure, and production-ready systems by providing:

Automated ML pipelines

Continuous monitoring and drift detection

Secure, compliant infrastructure

Scalable cloud deployment

Ongoing lifecycle management

As AI adoption accelerates across industries, operational excellence becomes a competitive advantage. Businesses that invest in managed MLOps gain faster time-to-market, improved reliability, and long-term scalability.