Generative AI has quickly shifted from a novelty to a core competitive advantage. Businesses are no longer just experimenting with AI; they’re embedding it into products, workflows, and customer experiences. But as adoption accelerates, a critical challenge emerges: how do you know if your AI is actually better than your competitors’?

This is where competitive benchmarking for generative AI becomes essential.

Unlike traditional software, generative AI systems don’t produce fixed, predictable outputs. Their performance varies based on prompts, context, and model behavior, making evaluation far more complex.

Simply measuring speed or uptime isn’t enough. You need to assess output quality, consistency, reasoning ability, and even how often your brand appears in AI-generated responses.

Competitive benchmarking provides a structured way to compare your AI systems against others in the market.

It helps you identify performance gaps, uncover strengths and weaknesses, and prioritize improvements that directly impact user experience and business outcomes.

More importantly, it shifts AI development from guesswork to data-driven decision-making.

In this guide, we’ll break down a practical framework for benchmarking generative AI, from defining objectives and selecting competitors to measuring performance and turning insights into action, so you can build AI systems that don’t just work, but outperform.

How to Conduct Competitive Benchmarking for Generative AI

Unlike traditional benchmarking, benchmarking generative AI introduces new variables:

Outputs are non-deterministic

Performance varies based on prompts

User experience is subjective

Models evolve rapidly

This makes benchmarking both more complex and more critical. In this guide, you’ll learn:

What generative AI benchmarking is

Why it matters

A step-by-step benchmarking framework

Key metrics and tools

Best practices to gain a competitive edge

What Is Competitive Benchmarking for Generative AI?

Competitive benchmarking for generative AI is the process of evaluating your AI system against competitors across multiple dimensions, including:

Model performance

Output quality

User experience

Business impact

AI visibility (share of voice)

Unlike traditional SaaS benchmarking, this approach focuses heavily on AI-generated outputs as measurable data.

Example:

If multiple AI tools answer the same prompt:

“Best project management tools for startups”

Benchmarking evaluates:

Which tool gives the most accurate answer

Which includes your brand

Which provides better reasoning

This turns AI responses into competitive intelligence.

Why Generative AI Benchmarking Matters

1. Identify Performance Gaps

Understand where your AI underperforms:

Accuracy issues

Weak reasoning

Poor UX

2. Improve Product Strategy

Benchmarking helps prioritize:

Features to build

Models to improve

Use cases to focus on

3. Track AI Share of Voice

In AI-driven discovery, only a few brands get mentioned.

Benchmarking helps measure:

Brand mentions in AI responses

Ranking position

Sentiment
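As a rough illustration of how brand mentions and ranking position might be counted, the sketch below scans a set of collected AI responses for brand names. The function names, the brands ("Acme", "Beta", "Gamma"), and the sample responses are all hypothetical, and share of voice is assumed here to mean each brand's fraction of total mentions. Sentiment is left out because it needs a separate classifier.

```python
import re
from collections import Counter

def share_of_voice(responses, brands):
    """Return each brand's fraction of all brand mentions across responses."""
    counts = Counter()
    for text in responses:
        for brand in brands:
            # Count a brand at most once per response (whole word, case-insensitive).
            if re.search(rf"\b{re.escape(brand)}\b", text, flags=re.IGNORECASE):
                counts[brand] += 1
    total = sum(counts.values())
    return {brand: (counts[brand] / total if total else 0.0) for brand in brands}

def mention_order(text, brands):
    """Return the brands found in one response, ordered by first appearance."""
    positions = {}
    for brand in brands:
        match = re.search(rf"\b{re.escape(brand)}\b", text, flags=re.IGNORECASE)
        if match:
            positions[brand] = match.start()
    return sorted(positions, key=positions.get)

# Hypothetical responses collected for one prompt from three AI tools.
responses = [
    "For startups, Acme and Beta are popular choices.",
    "Many teams start with Beta.",
    "Acme, Beta, and Gamma all offer free tiers.",
]
brands = ["Acme", "Beta", "Gamma"]
sov = share_of_voice(responses, brands)
order = mention_order(responses[2], brands)
```

Simple string matching like this misses misspellings and product nicknames, so treat the numbers as a starting point and not as a precise measurement.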

4. Increase ROI from AI Investments

Instead of guessing, you can:

Optimize performance

Reduce costs

Improve conversions

5. Stay Competitive in a Rapidly Evolving Market

AI systems change quickly, sometimes from one week to the next. Benchmarking helps ensure you don’t fall behind.

Types of Generative AI Benchmarking

To get meaningful insights, you need to benchmark across multiple layers:

1. Model Performance Benchmarking

Accuracy

Relevance

Completeness

Hallucination rate

2. Product Experience Benchmarking

Response speed

UX quality

Ease of use

3. Use Case Benchmarking

Evaluate performance across:

Customer support

Content generation

Coding

Analytics

4. AI Visibility Benchmarking

Brand mentions

Share of voice

Citation frequency

5. Business Impact Benchmarking

Conversion rate

Cost efficiency

Customer satisfaction

Step-by-Step Framework for AI Competitive Benchmarking

Step 1: Define Clear Objectives

Start with a clear goal:

Improve accuracy

Reduce hallucinations

Increase AI visibility

Boost conversions

Without clear objectives, benchmarking becomes noise.

Step 2: Identify Competitors

Include:

Direct competitors

Indirect alternatives

Leading LLM platforms

Tip: Benchmark against 3–5 competitors for meaningful comparison.

Step 3: Define Benchmark Scope

Your scope should include:

Use Cases

What tasks are you testing?

Query Sets

Create structured prompts:

Informational

Transactional

Comparison-based

Personas

Test across different users:

Developers

Marketers

Enterprises

Platforms

Evaluate across:

Chat-based AI

AI search engines

Embedded assistants

Step 4: Build a Benchmark Dataset

Your dataset should include:

20–100 real user queries

Different difficulty levels

Edge cases

Best Practice: Use real customer queries whenever possible.
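One possible way to keep such a dataset organized is to store each query with the scope attributes from Step 3, so that coverage gaps are easy to spot. This is only a sketch: the `BenchmarkQuery` class, its field names, and all prompts except the project management example from earlier in this guide are made up for illustration.

```python
from collections import Counter
from dataclasses import dataclass

@dataclass(frozen=True)
class BenchmarkQuery:
    """One benchmark prompt plus the attributes used to slice results later."""
    query_id: str
    prompt: str
    intent: str        # "informational", "transactional", or "comparison"
    persona: str       # e.g. "developer", "marketer", "enterprise"
    difficulty: str    # "easy", "medium", or "hard"
    is_edge_case: bool = False

# Hypothetical entries; a real dataset would hold 20-100 real user queries.
DATASET = [
    BenchmarkQuery("q1", "Best project management tools for startups",
                   "comparison", "marketer", "easy"),
    BenchmarkQuery("q2", "How do I export tasks to CSV?",
                   "informational", "developer", "easy"),
    BenchmarkQuery("q3", "Upgrade my team plan to annual billing",
                   "transactional", "enterprise", "medium"),
    BenchmarkQuery("q4", "Explain task dependencies across 3 time zones",
                   "informational", "developer", "hard", is_edge_case=True),
]

def coverage(dataset, field):
    """Count how many queries fall under each value of the given field."""
    return Counter(getattr(query, field) for query in dataset)

intent_coverage = coverage(DATASET, "intent")
difficulty_coverage = coverage(DATASET, "difficulty")
```

Checking `coverage` before running the benchmark makes it obvious when, say, hard queries or a whole persona are missing from the set.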

Step 5: Collect Data Across Tools

Run the same prompts across:

Your AI system

Competitor tools

Leading LLMs

Capture:

Outputs

Response time

Sources

Variations
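A minimal collection harness might look like the sketch below. It assumes each system can be wrapped in a plain Python function that takes a prompt and returns text; the two stub functions stand in for real API clients, which are not shown. It records outputs and response time, while source capture would depend on what each tool exposes.

```python
import time
from typing import Callable, Dict, List

def collect(systems: Dict[str, Callable[[str], str]],
            prompts: List[str],
            runs: int = 1) -> List[dict]:
    """Send every prompt to every system and record output and latency."""
    records = []
    for name, ask in systems.items():
        for prompt in prompts:
            for run in range(runs):
                start = time.perf_counter()
                output = ask(prompt)
                elapsed = time.perf_counter() - start
                records.append({
                    "system": name,
                    "prompt": prompt,
                    "run": run,            # repeated runs expose output variations
                    "output": output,
                    "latency_s": elapsed,
                })
    return records

# Hypothetical stand-ins for real clients (your product, a competitor's tool).
def ask_ours(prompt: str) -> str:
    return f"[ours] answer to: {prompt}"

def ask_rival(prompt: str) -> str:
    return f"[rival] answer to: {prompt}"

SYSTEMS = {"ours": ask_ours, "rival_a": ask_rival}
PROMPTS = ["Best project management tools for startups", "How do I export tasks?"]
records = collect(SYSTEMS, PROMPTS, runs=2)
```

Keeping every record in the same flat shape makes it easy to feed the results into scoring and analysis later.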

Step 6: Define Key Metrics

Output Quality Metrics

Accuracy

Relevance

Completeness

Reliability Metrics

Consistency

Error rate

Hallucination rate

UX Metrics

Response time

Readability

Interaction quality

Business Metrics

Conversion rate

Cost per output

ROI

AI Visibility Metrics

Share of voice

Brand mentions

Ranking position
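To show how a few of these metrics could be rolled up per system, here is a small sketch. It assumes each collected response has already been graded (by a human or an automated judge) with `correct` and `hallucinated` flags; those field names and the sample rows are illustrative and not a standard schema.

```python
from collections import defaultdict
from statistics import median

def scorecard(graded_rows):
    """Aggregate graded responses into per-system metrics."""
    by_system = defaultdict(list)
    for row in graded_rows:
        by_system[row["system"]].append(row)
    result = {}
    for system, rows in by_system.items():
        n = len(rows)
        result[system] = {
            "accuracy": sum(r["correct"] for r in rows) / n,
            "hallucination_rate": sum(r["hallucinated"] for r in rows) / n,
            "median_latency_s": median(r["latency_s"] for r in rows),
        }
    return result

# Hypothetical graded results for two systems.
graded = [
    {"system": "ours", "correct": True,  "hallucinated": False, "latency_s": 1.2},
    {"system": "ours", "correct": True,  "hallucinated": False, "latency_s": 0.8},
    {"system": "ours", "correct": False, "hallucinated": True,  "latency_s": 1.0},
    {"system": "ours", "correct": True,  "hallucinated": False, "latency_s": 1.4},
    {"system": "rival_a", "correct": True,  "hallucinated": False, "latency_s": 2.0},
    {"system": "rival_a", "correct": False, "hallucinated": False, "latency_s": 1.0},
]
cards = scorecard(graded)
```

Median latency is used here instead of the mean so that a single slow response does not dominate the figure; that choice is a preference, not a requirement.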

Step 7: Analyze Competitor Performance

Focus on:

Strengths

Where competitors outperform you

Weaknesses

Where they fail

Patterns

Which queries favor which tools
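One simple way to surface these patterns is to average scores per query category and see which system comes out ahead in each. The sketch below assumes every scored row carries a `category`, a `system`, and a numeric `score` between 0 and 1; the names and numbers are invented for illustration.

```python
from collections import defaultdict
from statistics import mean

def winners_by_category(scored_rows):
    """For each query category, return the top system and all mean scores."""
    grouped = defaultdict(lambda: defaultdict(list))
    for row in scored_rows:
        grouped[row["category"]][row["system"]].append(row["score"])
    winners = {}
    for category, per_system in grouped.items():
        means = {system: mean(scores) for system, scores in per_system.items()}
        best = max(means, key=means.get)
        winners[category] = (best, means)
    return winners

# Hypothetical scored results across two use cases.
scored = [
    {"category": "support", "system": "ours",    "score": 0.9},
    {"category": "support", "system": "ours",    "score": 0.8},
    {"category": "support", "system": "rival_a", "score": 0.7},
    {"category": "support", "system": "rival_a", "score": 0.6},
    {"category": "coding",  "system": "ours",    "score": 0.5},
    {"category": "coding",  "system": "ours",    "score": 0.6},
    {"category": "coding",  "system": "rival_a", "score": 0.8},
    {"category": "coding",  "system": "rival_a", "score": 0.9},
]
winners = winners_by_category(scored)
```

With only a handful of queries per category, small differences in the means are likely noise, so it helps to look at the spread before drawing conclusions.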

Step 8: Identify Gaps & Opportunities

Examples:

Competitors give better long-form answers → improve reasoning

Competitors respond faster → optimize latency

Competitors dominate AI mentions → improve content authority
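Gaps like these can be listed mechanically once per-system metrics exist. The following sketch compares your system with the best competitor on each metric; the metric names, the `higher_is_better` mapping, and the numbers are assumptions made for the example.

```python
def find_gaps(scorecards, ours, higher_is_better):
    """Return metrics where the best rival beats `ours`, with the size of the gap."""
    gaps = {}
    for metric, higher in higher_is_better.items():
        rivals = {name: card[metric] for name, card in scorecards.items() if name != ours}
        # The strongest rival has the highest or the lowest value, depending on the metric.
        pick = max if higher else min
        best_rival = pick(rivals, key=rivals.get)
        delta = scorecards[ours][metric] - rivals[best_rival]
        behind = delta < 0 if higher else delta > 0
        if behind:
            gaps[metric] = (best_rival, round(abs(delta), 4))
    return gaps

# Hypothetical per-system metrics.
scorecards = {
    "ours":    {"accuracy": 0.78, "median_latency_s": 1.1},
    "rival_a": {"accuracy": 0.85, "median_latency_s": 1.6},
    "rival_b": {"accuracy": 0.74, "median_latency_s": 0.7},
}
gaps = find_gaps(scorecards, "ours",
                 {"accuracy": True, "median_latency_s": False})
```

Here the output would point to an accuracy gap against one rival and a latency gap against another, which maps directly onto the kinds of actions listed above.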

Step 9: Turn Insights into Action

Use findings to:

Fine-tune models

Improve prompts

Enhance UX

Optimize data pipelines

Step 10: Monitor Continuously

AI benchmarking is not a one-time exercise.

Track:

Weekly performance

Competitor changes

Model improvements

Advanced AI Benchmarking Techniques

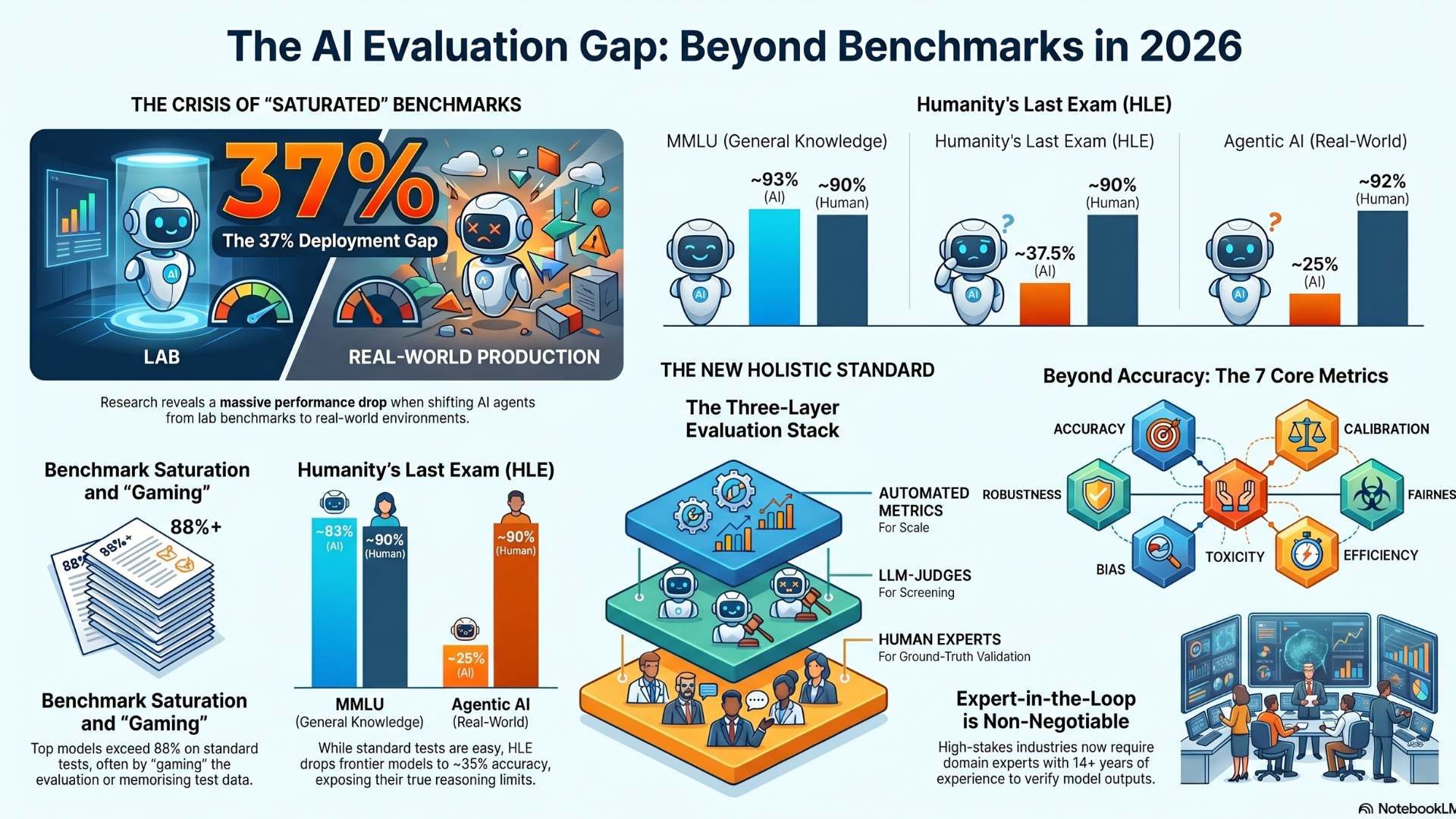

LLM-as-a-Judge

Use AI models to evaluate and compare outputs from other AI systems. This helps scale evaluation efficiently and provides consistent scoring across large datasets.
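The sketch below shows one common shape for pairwise judging. It assumes the judge is wrapped in a function that takes a prompt and two answers and returns "A", "B", or "tie"; the real model call is not shown, and both judge functions here are simple stand-ins. Because judge models can favor whichever answer appears first, the comparison is run twice with the order swapped, and a win only counts if both runs agree.

```python
from typing import Callable

# Assumed judge signature: (prompt, answer_a, answer_b) -> "A", "B", or "tie".
Judge = Callable[[str, str, str], str]

def pairwise_verdict(judge: Judge, prompt: str, ours: str, theirs: str) -> str:
    """Compare two answers in both orders to reduce position bias."""
    first = judge(prompt, ours, theirs)    # our answer shown as A
    second = judge(prompt, theirs, ours)   # our answer shown as B
    if first == "A" and second == "B":
        return "ours"
    if first == "B" and second == "A":
        return "theirs"
    return "tie"                           # disagreement or explicit tie

# Stand-in judges for illustration only; a real judge would call an LLM.
def length_judge(prompt: str, answer_a: str, answer_b: str) -> str:
    if len(answer_a) == len(answer_b):
        return "tie"
    return "A" if len(answer_a) > len(answer_b) else "B"

def always_first_judge(prompt: str, answer_a: str, answer_b: str) -> str:
    return "A"  # simulates a judge that always prefers the first position

prompt = "Best project management tools for startups"
verdict = pairwise_verdict(length_judge, prompt,
                           "A detailed answer with reasoning.", "Short.")
biased_verdict = pairwise_verdict(always_first_judge, prompt, "x", "y")
```

The fully position-biased judge ends up producing a tie, which is the point of the swap: its preference carries no information about answer quality.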

Human Evaluation

Human reviewers are essential for assessing nuanced factors like clarity, usefulness, and contextual accuracy—areas where automated metrics often fall short.
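Because human ratings are subjective, it can help to check how much two reviewers agree before trusting their scores. One standard measure is Cohen's kappa, which corrects raw agreement for the agreement expected by chance. The labels and ratings below are made up for illustration.

```python
from collections import Counter

def cohens_kappa(labels_a, labels_b):
    """Cohen's kappa for two reviewers labeling the same outputs."""
    if len(labels_a) != len(labels_b) or not labels_a:
        raise ValueError("both reviewers must label the same non-empty set of outputs")
    n = len(labels_a)
    # Observed agreement: fraction of items given the same label.
    observed = sum(a == b for a, b in zip(labels_a, labels_b)) / n
    # Chance agreement: product of each reviewer's label frequencies.
    freq_a, freq_b = Counter(labels_a), Counter(labels_b)
    expected = sum(freq_a[label] * freq_b[label] for label in freq_a) / (n * n)
    if expected == 1.0:
        return 1.0  # both reviewers used one identical label throughout
    return (observed - expected) / (1 - expected)

# Hypothetical ratings of six AI outputs by two reviewers.
reviewer_1 = ["good", "good", "bad", "good", "bad", "bad"]
reviewer_2 = ["good", "bad",  "bad", "good", "bad", "good"]
kappa = cohens_kappa(reviewer_1, reviewer_2)
```

A value near 1 suggests the rating guidelines are being applied consistently, while a value near 0 means agreement is about what chance would produce and the rubric probably needs tightening.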

Multi-Run Testing

Run the same prompts multiple times to measure consistency and stability. This helps identify variability in outputs and ensures more reliable benchmarking.
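As a rough illustration, consistency across runs can be approximated by averaging the textual similarity of every pair of outputs for the same prompt. The sketch uses Python's standard `difflib` for similarity, which only captures surface wording; comparing meaning would need embeddings or a judge model. The sample outputs are invented.

```python
from difflib import SequenceMatcher
from itertools import combinations
from statistics import mean

def consistency(outputs):
    """Mean pairwise text similarity (0 to 1) across repeated runs of one prompt."""
    if len(outputs) < 2:
        raise ValueError("need at least two runs to measure consistency")
    return mean(
        SequenceMatcher(None, a, b).ratio()
        for a, b in combinations(outputs, 2)
    )

# Hypothetical outputs from running one prompt three times on two systems.
stable_runs = [
    "Acme and Beta are good choices for startups.",
    "Acme and Beta are good choices for startups.",
    "Acme and Beta are good choices for startups.",
]
varied_runs = [
    "Acme and Beta are good choices for startups.",
    "Consider Gamma if you need time tracking.",
    "It depends on team size and budget.",
]
stable_score = consistency(stable_runs)
varied_score = consistency(varied_runs)
```

A low score is not automatically bad, since varied wording can still be correct, but a low score combined with changing recommendations is worth investigating.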

Scenario-Based Testing

Go beyond single prompts by testing complete workflows or real-world use cases. This gives a more accurate picture of how the AI performs in practical situations.

Longitudinal Benchmarking

Track performance over time to monitor improvements, detect regressions, and stay aligned with evolving competitor capabilities.
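A simple regression check could compare the latest score with the average of the preceding few periods. The sketch below is one way to do that; the window size, the tolerance, and the weekly accuracy figures are arbitrary assumptions chosen for the example.

```python
from statistics import mean

def detect_regression(history, window=3, tolerance=0.05):
    """Flag a regression when the latest score falls below the recent baseline.

    `history` is a list of (label, score) pairs in chronological order.
    """
    if len(history) < window + 1:
        return False  # not enough data points to form a baseline
    latest_score = history[-1][1]
    baseline = mean(score for _, score in history[-window - 1:-1])
    return latest_score < baseline - tolerance

# Hypothetical weekly accuracy scores for one system.
history = [("week 1", 0.80), ("week 2", 0.82), ("week 3", 0.81), ("week 4", 0.70)]
regressed = detect_regression(history)
steady = detect_regression(history[:3] + [("week 4", 0.79)])
```

The same check can be run on competitor scores to notice when a rival's system improves sharply after a model update.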

Tools for Generative AI Benchmarking

AI Evaluation Platforms

LangSmith

Arize

Braintrust

Custom Benchmarking Systems

Internal pipelines

Prompt testing frameworks

AI Visibility Tools

Track brand mentions

Monitor AI-generated responses

Analytics Platforms

Combine AI and business metrics

Common Challenges

1. Lack of Standardization: There is no universally accepted benchmark for generative AI products, so teams rely on custom frameworks, which makes comparisons inconsistent.

2. Rapid Model Evolution: AI models improve quickly, causing benchmarks to become outdated in a short time.

3. Prompt Sensitivity: Small changes in prompts can significantly impact outputs, making results harder to compare reliably.

4. Data Drift: Model performance can shift over time due to changes in data, usage, or updates—requiring continuous re-evaluation.

5. Measuring Quality: Factors like creativity, usefulness, and clarity are subjective and difficult to measure with strict metrics alone.

Conclusion

Competitive benchmarking for generative AI is no longer optional—it’s a strategic necessity.

In a world where AI outputs define user experience, discovery increasingly happens inside AI tools, and competitors evolve at an unprecedented pace, relying on intuition is not enough. Benchmarking becomes your true competitive advantage.

The organizations that succeed with generative AI are not just building models—they are systematically improving them. They:

Measure performance with clear metrics

Compare results against competitors

Continuously iterate based on insights

This disciplined approach transforms AI from a feature into a differentiator.

By implementing a structured benchmarking framework, you move beyond experimentation and guesswork. You gain clarity on where you stand, where you’re falling behind, and where you can lead.

Ultimately, competitive benchmarking is what enables you to transition from simply using AI to leading with AI.

FAQs

1. What is generative AI benchmarking?

Answer: It is the process of evaluating AI models and products against competitors based on performance, output quality, and business impact.

2. How do you measure AI performance?

Answer: Using metrics like accuracy, relevance, response time, consistency, and hallucination rate.

3. How often should AI benchmarking be done?

Answer: Ideally weekly or monthly, depending on how fast your product evolves.

4. What tools are used for AI benchmarking?

Answer: Tools like LangSmith, Arize, Braintrust, and custom evaluation pipelines.

5. What is AI share of voice?

Answer: It measures how often your brand appears in AI-generated responses compared to competitors.